Regulators as Builders: The Agent Mystery Shopper

An example of how regulators can use AI to build tools to combat fraud

Yesterday I built a prototype Regulatory Mystery Shopper Toolkit. This post traces how I did it. Most of the build took about an hour.

Context

I was recently at a conference co-hosted by the Securities and Exchange Board of India (SEBI). One of the topics discussed was digital fraud and the increase in scam sites.

The discussion made me think of mystery shopping. Mystery shopping is when a regulator poses as a real-world customer and seeks financial services from firms to evaluate, for instance, compliance with fair lending laws and regulations. Mystery shopping can be effective, but it is labor intensive and the resulting sample sizes tend to be fairly small.

I wondered if an AI agent could be built to conduct mystery shopping at scale to ferret out scam sites.

Research

I asked GPT5.2:

In terms of battling modern day financial scams and fraud, can AI agents be used by the regulatory authorities to do “mystery shopping” to ferret out fraudulent websites, etc.?

It answered in the affirmative, noting that digital mystery shopping amounts to “automated websweeps + undercover user journeys to surface scam websites, impersonation pages, and illegal financial promotions at much higher scale than human teams alone.”

It highlighted related efforts by other regulators and standard setters, e.g., the UK FCA, FINRA, and IOSCO. It then provided a structured step-by-step of “what AI-agent mystery shopping looks like in practice”, followed by highlights of “the hard parts” regarding legal, operational, and model risk considerations.
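
To make the "automated websweep" idea a little more concrete, here is a minimal sketch of one small piece of it: scanning page text that has already been collected for common scam red flags, then ranking pages for human review. It is not taken from GPT5.2's answer or from the toolkit itself. The pattern names, the regular expressions, and the functions `triage_page` and `rank_for_review` are all hypothetical, chosen only for illustration, and a real tool would need far richer signals plus legal review.

```python
import re
from dataclasses import dataclass, field

# Hypothetical red-flag patterns, chosen for illustration only.
# A real toolkit would derive these from regulator guidance and legal review.
RED_FLAGS = {
    "guaranteed_returns": re.compile(r"\bguaranteed\s+(returns?|profits?)\b", re.I),
    "risk_free": re.compile(r"\b(risk[- ]free|no\s+risk)\b", re.I),
    "urgency": re.compile(r"\b(act\s+now|limited\s+time|only\s+\d+\s+spots?)\b", re.I),
    "regulator_name_drop": re.compile(
        r"\b(approved|endorsed)\s+by\s+(sebi|the\s+fca|finra)\b", re.I
    ),
}


@dataclass
class TriageResult:
    url: str
    flags: list = field(default_factory=list)

    @property
    def score(self) -> int:
        # One point per distinct red flag found on the page.
        return len(self.flags)


def triage_page(url: str, text: str) -> TriageResult:
    """Return which red-flag patterns appear in already-fetched page text."""
    hits = [name for name, pattern in RED_FLAGS.items() if pattern.search(text)]
    return TriageResult(url=url, flags=hits)


def rank_for_review(pages: dict) -> list:
    """Sort pages so those with the most red flags reach a human reviewer first."""
    results = [triage_page(url, text) for url, text in pages.items()]
    return sorted(results, key=lambda r: r.score, reverse=True)


if __name__ == "__main__":
    # Made-up sample text; no network access is involved.
    sample_pages = {
        "https://example.com/invest": "Guaranteed returns of 30% a month. Risk-free. Act now!",
        "https://example.org/about": "We are a registered broker. Investments carry risk.",
    }
    for result in rank_for_review(sample_pages):
        print(result.score, result.url, result.flags)
```

The sketch deliberately stops at triage. A keyword match is only a reason for a person to look closer, not a finding of fraud, which fits the "hard parts" around legal and model risk noted above.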

It asked if I wanted a formal report. I said yes. It is attached here and is worth checking out in full:

Planning

Continuing the conversation, I then asked it:

draft a prompt for Codex to develop a prototype set of tools comprising the MVP described above. i would like to eventually post this to GitHub for others to evaluate and critique

As background, Codex is OpenAI’s coding assistant, analogous to Anthropic’s Claude Code. Coding assistants are different from AI chatbots in that they can take actions on your computer and build things, not just search the web and respond to queries. (I wanted to try Codex to compare it to Claude Code. Google also has a coding assistant called Gemini CLI.)

While coding assistants can take instructions in natural language, I’ve found it helpful to converse, research, and plan via a chatbot interface and to ask it to create a prompt for a coding assistant, which then does the building. This has led to cleaner builds and fewer bugs for me.

The full prompt generated by GPT5.2 is here. Non-technical readers may find the structure and level of detail interesting:

Building

I ended up using Claude Code because Codex kept crashing on my laptop. I pasted the prompt from above into the CLI version of Claude Code. It then began building.

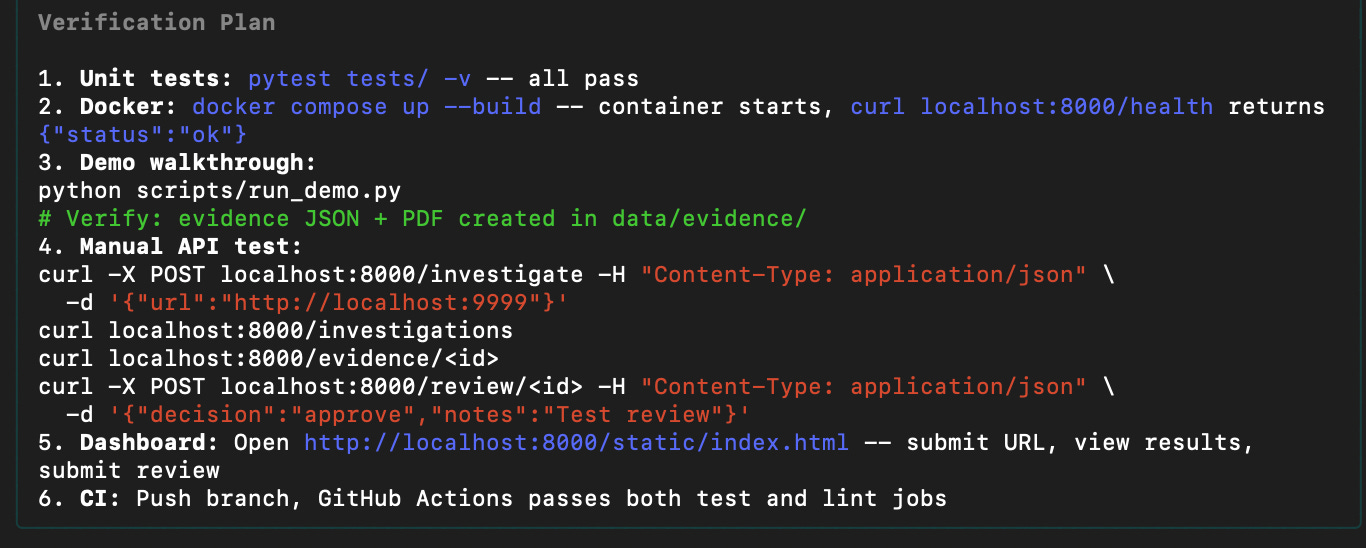

Importantly, the prompt that GPT5.2 had generated was comprehensive and included architecture and full-stack specs, security and safeguards, legal and policy documentation, and a suite of testing requirements. As a non-coder, I cannot tell how robust these are, but the structure and requirements were familiar to Claude Code and the build unfolded smoothly.

In the end, Claude Code generated an extensive set of files and published them to a public GitHub repo for anyone interested in giving it a spin or improving it.

So what?

In my last post on the new AI jobs in supervision, I noted that there will be a role for builders as regulatory agencies adopt AI. My hope is that this post provides a concrete example of what that means. On my own, and despite lacking coding experience, I was able to spin up a prototype of an agentic-AI mystery shopper to help combat digital fraud in about an hour.

Fraud is on the rise, in part because AI is making it easier to commit. This quick experiment shows that regulators can fight fire with fire if they can embrace AI tools and inspire their staff to build with them.